IBM and AMD have joined forces to deliver cutting-edge AI computing infrastructure to Zyphra, a San Francisco–based open-source AI research company.

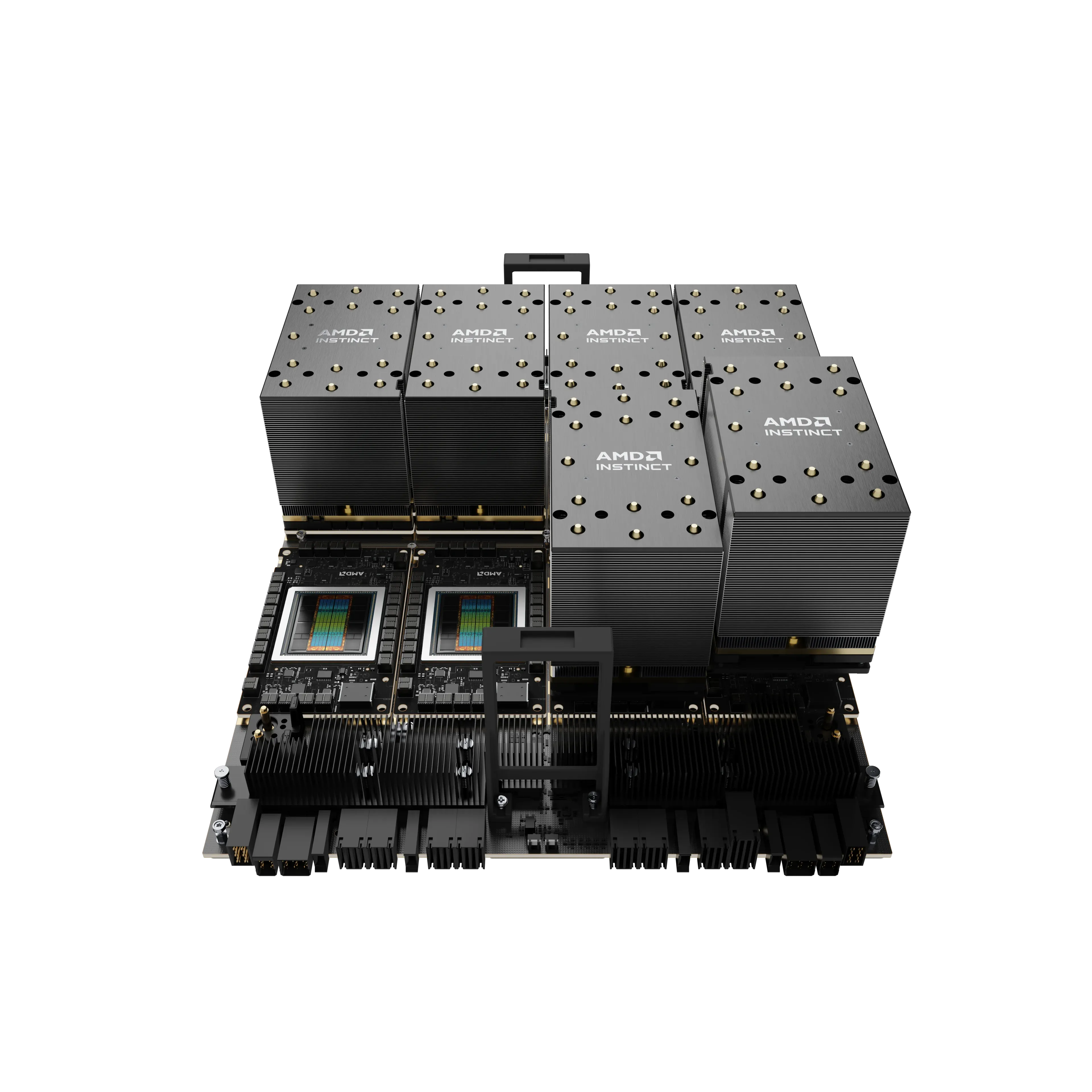

This collaboration aims to push the limits of generative AI by providing Zyphra with one of the largest AI training clusters ever built, powered by AMD Instinct™ MI300X GPUs and hosted on IBM Cloud.

The multi-year partnership positions IBM to deliver scalable, high-performance AI infrastructure that supports Zyphra’s development of frontier multimodal foundation models capable of understanding and generating language, vision, and audio.

These models will power Maia, Zyphra’s general-purpose “superagent” designed to enhance productivity for enterprise knowledge workers.

Accelerating the Future of AI Infrastructure

This collaboration marks the first large-scale deployment on IBM Cloud of AMD’s full-stack training platform, which spans compute, networking, and acceleration.

The combination of IBM’s secure, enterprise-grade cloud and AMD’s leadership in AI acceleration creates a new benchmark for performance, efficiency, and scalability in AI training.

Zyphra’s rapid rise, capped by a Series A round at a $1B valuation, underscores its ambition to become a leader in open-source superintelligence. With IBM and AMD’s infrastructure, Zyphra gains the ability to train increasingly advanced models faster while maintaining flexibility and cost efficiency.

Executives from all three companies highlight this as a step toward a new era in AI: one where open collaboration, scalable infrastructure, and cross-industry partnerships accelerate real-world innovation.

As AI models grow larger and more complex, collaborations like this will define how the next generation of intelligent systems is built: not by computing power alone, but by the strategic alignment of cloud, silicon, and open science.